Part 1: Blockchain Scaling | Consensus, Sharding, and Data Availability

Notley Research | Blockchains and Crypto

Welcome! This is lesson three of our deep dive course AND part one of our mini-series on Blockchain Scaling. If you haven’t had a chance to read parts one and two of our deep dive course, I highly recommend you do so before diving into this post! Several components of this article will assume an understanding of topics discussed in previous weeks.

If you want to keep up with the rest of our research, hit that subscribe button!

Blockchain Scaling — What’s the big deal?

To become an effective global payment system, a blockchain network must at least compete with current products on the market. Sadly, with Bitcoin and Ethereum currently capped at an average of 7 and 15 transactions per second (TPS) – compared to Visa and Mastercard’s 1,700 and 2,150 – it’s clear blockchains are still a long way away from achieving this goal. As such, this week, we’ll dive into Part 1 of what’s proven to be blockchain’s “kryptonite” to date: Scalability.

Within a blockchain, the main goal of scalability is to increase transaction speed (how quickly a transaction is finalized) and transaction throughput (how many transactions can process simultaneously) without sacrificing the security or decentralization of the chain.1 This issue has proven incredibly difficult and is now referred to by the industry as “The Blockchain Trilemma.”

The Blockchain Trilemma

The Blockchain Trilemma is a term coined by Ethereum co-founder, Vitalik Buterin, to describe a set of three main issues developers must consider when building blockchains. Unfortunately, developers are often forced to sacrifice one ‘aspect’ for the sake of the other two.

Decentralization: Rather than being managed by a single entity, blockchains distribute control over the network to a large number of independent participants. Additionally, a truly decentralized blockchain should allow any interested participant to “join” the chain, propose their own transactions, and view the chain’s inner workings without relying on a third party. Disadvantages of decentralization include the need for redundant, less efficient systems2 and the exponentially increasing difficulty of node-to-node communication as the network grows.

Security: Blockchain networks should easily prevent malicious entities from adjusting transactions or stealing value on the chain. Additionally, blockchains should provide sufficient incentives to the entities which secure the chain.

Scalability: Blockchains should support quick transaction finality and a large TPS without an equivalent increase in fees. This is easy when a single entity controls the ledger but exponentially more difficult as more nodes join the network.

To understand why this has proven difficult, let’s run through an example of a potential scaling solution and its consequences. Looking at the Proof of Work consensus model, we find a decentralized, secure system that cannot scale. Why can’t it scale?

First, remember PoW requires miners to spend time and electricity to create a block. The combined computational power spending this time and energy is known as the network hash rate.

Second, recognize that as the PoW network’s hash rate increases, the computational power needed to create a block also increases.

This leaves us with a system that, regardless of the computational power it commands, will always produce roughly the same number of blocks in a given time period. In other words, if it’s set to produce a block every 10 minutes, it will always produce a block roughly every 10 minutes. Furthermore, remember, blocks are not magic — they are simply data structures with finite storage space (or computation cycles depending on the chain). As a result, we only have one immediately clear method of scaling a PoW system — increasing the size of a block (or the data it can store).

What are the consequences of this? Well, on one hand, increasing the block size allows the system to store more transactions in each block. And what does more transactions in a block mean? Higher transaction throughput! Woohoo!

But wait, it’s not quite that easy… Another consequence of larger block sizes is a larger blockchain. Remember, blockchains are effectively large databases, and each mining node in a PoW system must download the entire database to participate in the network. Can you take a guess what adverse effect a larger, more memory-intensive blockchain may cause? That’s right, it sets a higher bar to run a node, leading to fewer nodes and increased centralization. This idea was discussed heavily during Bitcoin’s Block Wars.

With that out of the way, let’s take a closer look at the methods developers are currently building to solve the Blockchain Trilemma. Blockchains can implement two core scaling methods: on-chain scaling and off-chain scaling.

On-Chain Scaling

On-chain scaling entails upgrading or optimizing the core blockchain, known as Layer 1. Layer 1 optimization takes two primary forms: adjustments to core consensus algorithms and adjustments to the chain processing logic. We’ll explore consensus model adjustments first. The most commonly used common alternative consensus mechanism is Proof of Stake (PoS).

Proof of Stake

Proof of Stake (PoS) is a consensus model designed to reduce the environmental concerns around blockchain security.3 In simple terms, Proof of Stake models choose the creator of a block based on the percentage of that creator’s stake in the network. Think of it like a lottery — there may be 1,000,000 tickets, but only one will win!

Within the system, users who wish to participate in block creation must lock – or stake – a certain amount of coins. Based on this stake, the system uses a pseudo-random selection process to determine which nodes will participate in block creation and validation. In most systems, the stakes’ size, and other factors such as time staked and blocks produced, affect the chances of being selected as the next block producer. Let’s look at an example.

Imagine we have a PoS network with 1,000,000 total coins, half of which are staked, and the other half of which aren’t. The staked coins are controlled by 1,000 different nodes, with the largest node holding 100,000 coins and the smallest holding 5. Based on these amounts, the largest node will be chosen for block production roughly 20% of the time (owns 100,000 out of the 500,000 staked coins), while the smallest node will be selected for block production roughly 0.001% of the time (owns 5 of the 500,000 staked coins). Worth noting, the total supply of the coin is irrelevant to PoS consensus. Instead, only staked coins are considered when determining what node will create the next block.

Before going any further, remember from our last lesson that a block producer could attempt one of three things:

They could create a valid block and attempt to append it to the real chain.

They could create a valid block but attempt to append it to a chain with altered or fake transactions.

Create an invalid block that houses invalid transactions.

To ensure every block producer only attempts the first option, all the active nodes within the network have the opportunity to vote on 1. Whether the block is valid, and 2. To which chain the block should be added. Assuming the block receives enough votes to satisfy the consensus algorithm, the block is considered valid, and the node that produced the block receives payment for its service (generally through fees and/or a block reward).

Great! Now we have “honest” block producers. Well, what about the nodes voting to keep them honest?

A Proof of Stake system incentivizes nodes to vote honestly through a form of financial game theory. When nodes stake coins to the network, those coins are sent to a smart contract, which holds the coins as collateral in escrow. The smart contract then sets up “rules” the node must follow, which form the basis of the consensus algorithm. An example of a rule could be, “A node can only vote on a block’s validity once.” When a node breaks one of these rules, the smart contract destroys part or all of the node’s stake. This results in a situation where a vote for an invalid transaction, an invalid block, or even random downtime can have a hard financial consequence.

Some Proof of Stake systems, such as Ethereum’s Casper FFG, also make noticeable changes to the generalized block proposals seen in other consensus mechanisms. It’s a little too technically complex to dive into here, but if you’d like to learn more, I recommend reading the Casper FFG whitepaper.

In summary, Proof of Stake (PoS) consensus algorithms:

Require potential nodes to “stake” coins to the network, forcing them to hold a financial incentive in the chain itself.

For each block, one random staked coin is chosen. Whichever node holds said coin receives the right to create the block.

Once the block is created, the rest of the active nodes vote on the validity of the block. If the block receives enough votes, it is accepted by the network.

All actors in this process are incentivized to act honestly. If they do not, they risk losing the entirety of their “stake” in the network.

Proof of Stake is only one of many alternative consensus models. Other popular consensus models include Delegated Proof of Stake, Proof of History, Proof of Coverage, and a variety of others. Now that we understand how consensus can be adjusted to increase scalability let’s look at chain architecture and processing logic adjustments.

Chain Architecture

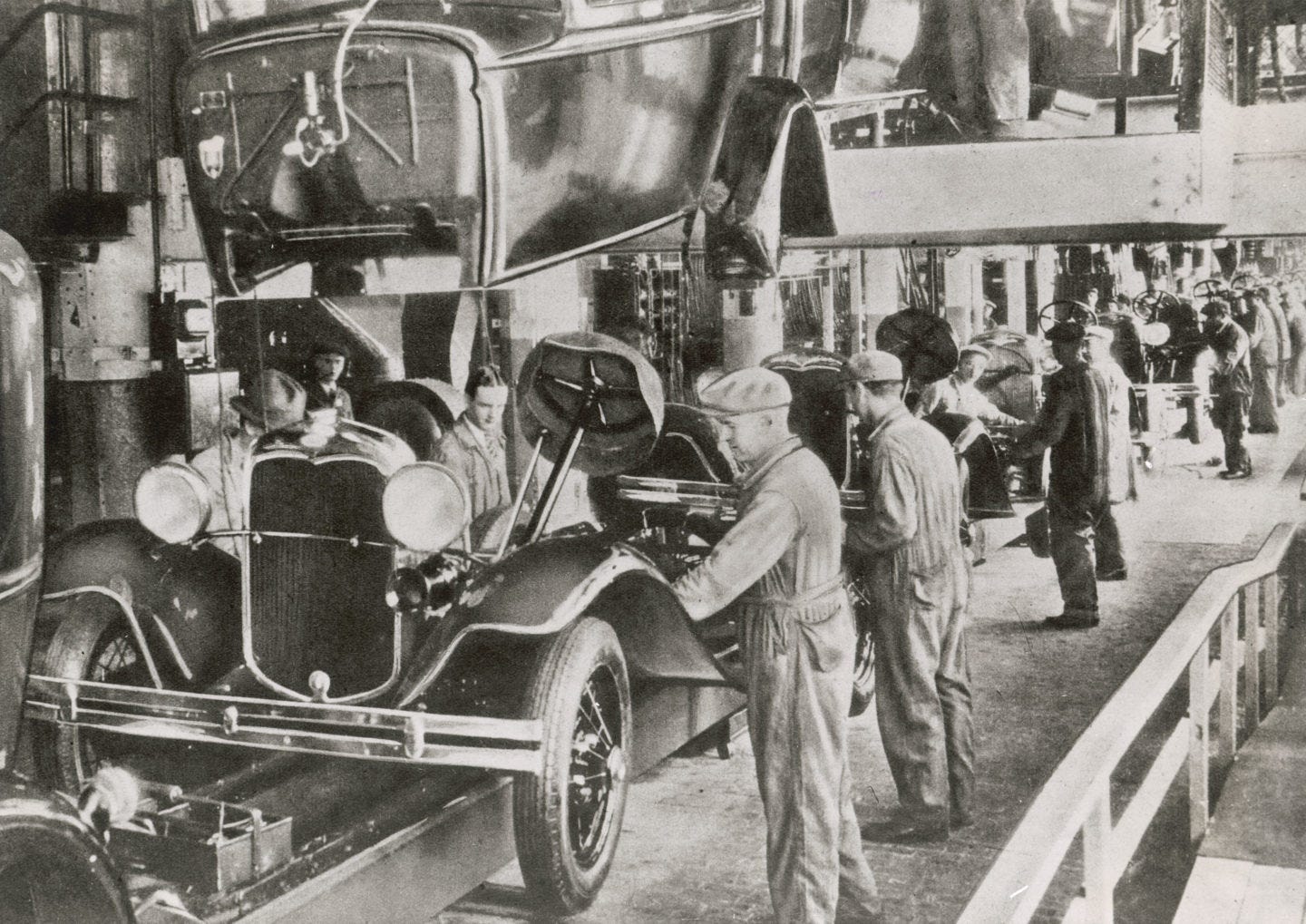

As a result of the many different ways a blockchain can be built, there are different forms of transaction processing within each chain. Some chains, like Bitcoin, use a monolithic architecture, which means a full node participates in every part of the blockchain: transaction creation, consensus, execution, etc. Other chains opt for a modular structure, which spits those responsibilities across different parties in the network. (kind of like an assembly line!)

For example, in a modular architecture, some nodes may focus on consensus while others focus on transaction processing. This separation of responsibilities allows for several potential scalability benefits if it can be implemented in a secure and decentralized fashion. One of the most common forms of modularization is known as Sharding.

Sharding

Sharding is a concept pulled from generalized database management where you break the database into smaller pieces (shards), with each piece housing a specific type of data. This process allows you to store and query the data in a more efficient manner while also simplifying database management. Within blockchains, sharding offers a similar benefit: it allows for more efficient data storage by breaking the chain into a network of Shard Chains. Let’s explore how this works.

In most blockchains, a full node stores the entire state of the blockchain, including account balances, data, and smart contract code. As a result, nodes in the network are forced to store and process every transaction. Within a sharded blockchain, this isn’t the case. Instead, the responsibility to process or store any given transaction is randomly assigned to a subset of nodes, known as a committee. This committee works to achieve consensus on a specific “shard” of data or transactions. One commonly proposed method of sharding is known as “state sharding.” In simple terms, state sharding allows nodes to store only a part of the total “state” (remember, a blockchain state is basically a snapshot of the account balances on the chain at that time) instead of the entire state. Confused? Let’s dive into an example to better understand.

Imagine you have a non-sharded blockchain with 1,000 accounts, 100 nodes, and a total size of 10GB. Furthermore, imagine the 76th account on the chain would like to send the 100th account $500 as payment for a service. To ensure this is a valid transaction, each node must track and store every single transaction that occurs on the blockchain. This allows each node to check Account 76’s transaction history and be positive that it has a balance of more than $500. After each node completes this process, the nodes come to consensus that the transaction is valid, and then the transaction if finalized. This process requires that all 100 nodes to:

Download and maintain the full 10GB state of the blockchain

2. Validate the transaction is legitimate

3. Process the new block in which the transaction is stored.

Now, imagine we have the same blockchain, comprised of five shards, each maintained by twenty nodes. The shards within the blockchain are set such that Shard One maintains the state of accounts 1-200, Shard Two maintains the state of accounts 201-400, Shard Three maintains the state of accounts 401-600, and so on. In this scenario, Shard One would be responsible for checking Account 76’s transaction history and validating that the transaction is legitimate. Assuming the nodes maintaining Shard One form consensus on the transaction’s legitimacy, the transaction occurs. This process requires:

Each node only downloads 1/5th of the full 10GB state of the blockchain.

Only nodes 1 through 20 (Shard One) must validate the transaction, leaving all other nodes open to validate their own transactions.

All nodes process the new block in which the transaction is stored.

To summarize, the sharded blockchain in this example:

Reduces the memory needed to run a node by 80%. This increases decentralization by giving less memory-intensive devices, like phones or laptops, the ability to run a full node.

Can potentially validate transactions at 5x the speed of the non-sharded blockchain since validating any given transaction only requires ⅕ of the nodes instead of all the nodes.

Now you might be thinking, “This is ridiculous; using ⅕ of the nodes obviously reduces security.” If that were the end of the story, you’d be right. Luckily (or unluckily, depending on how you look at it), there is quite a bit of additional complexity we have yet to discuss. But before we get into that, we need to take a quick pitstop and learn about the Data Availability Problem. This will be relevant throughout several of our lessons – So pay attention!

Data Availability Problem

To understand data availability, you first must recognize three relevant aspects of a blockchain. First, remember blocks are data storage structures with limited capacity. Second, remember each block is created by a node, which was chosen by the consensus mechanism used by its chain. Finally, recognize that a chain’s consensus mechanism produces new blocks on a consistent cadence (BTC produces a block roughly every 10 minutes, and ETH 2.0 produces a block every 12 seconds). Importantly, this cadence means that regardless of whether a block holds its maximum number of transactions, it will be published once the next block is proposed. In other words, it's common for blocks to have “empty space” or be partially filled when published. With that context, let’s dive into data availability.

The Data Availability Problem basically states the following: “How can nodes ensure all data in a proposed block (or a proposed state) was made available?” Said another way, if we aren’t downloading the entire data structure, how can we be positive there isn’t anything hiding within it?

To understand why this is important, imagine we have a sharded chain with blocks that can hold up to 100 transactions. Furthermore, imagine the next block has 50 valid transactions to be published. In this scenario, if a dishonest block producer manages to take control of a single shard, it could:

Use the corrupted shard to add ten invalid transactions to the block, hiding those transactions from the rest of the network.

Propose the block and tell all other nodes that the block only has the original 50 valid transactions.

Have the block with invalid transactions accepted by the network since none of the other shards can see the malicious activity within the corrupted shard.

In short, the Data Availability Problem means nodes need to ensure that transactions in a block are valid AND that all the data in that block is available to the network. If even a small portion of data is hidden or unavailable, it could result in an invalid transaction with catastrophic consequences. If you’d like a more technical explanation of how blockchains allow block proposers to hold back or hide data, check out the first few paragraphs of this piece (up to “A Partial Problem Statment”).

Now that’s covered, I recommend you lace up because we’re about to get technical. If you’d prefer to skip this, I’ll put a TL;DR at the bottom of this section. If not, read on.

Secure Sharding

Sharding uses several different mechanisms to provide secure scalability. I’m going to describe the entire process in a linear fashion but be aware, some of this is a little outdated.4 Regardless, it’s a good view of history, and I think the linear format helps with comprehension. First, let’s look at Randomly Sampled Committees (RSCs).

In short, Randomly Sampled Committees are the node networks that make up each shard. They operate just as they sound – nodes are randomly selected from the total node pool to form a committee within each shard. Furthermore, committees are constantly shuffled, adjusted, and remade. As a result, it becomes effectively impossible for a node to determine the shard in which it will participate until after the placement occurs. The goal of this process is to decrease the chances of collusion between nodes within a specific shard. Of course, it’s also possible that an attacker with sufficient network power could get lucky and have a large portion of its nodes assigned to a specific shard. Theoretically, this would allow them to override the honest nodes in a shard and spoof the “state” of that specific shard. This would invalidate the entire blockchain (as the blockchain state is simply a sum of its shards) and bring us back to square one, where every node must download the entire chain state.

So how do we solve this? The answer lies in Data Availability Sampling (DAS) and Erasure Coding.

To understand DAS, let’s look at our example from earlier. Within our sharded blockchain, the nodes in Shard One maintain ⅕ of the total state of the blockchain while assuming the other ⅘ of the chain is acting honestly. We now know we can’t make that assumption – so what can we do?

Imagine we operate Node 10 of the sharded blockchain in our previous example. Let’s also imagine Node 10 is a part of Shard One, which maintains the state of accounts 1-200 (or ⅕ of the total chain). To ensure that every other shard is acting honestly, we decide to take a tiny sample of the state of shards two through five. For this example, let’s say we take 1/50th of each. If we are the only node sampling these other shards, it’s unlikely we’d find anything wrong. However, if all 100 nodes also take a random 1/50th sample of each shard, the chances of one node finding an invalid state or hidden data increase dramatically. Furthermore, because each node only takes on an additional 4/50th of the blockchain due to this sampling (1/50 from each of the four other shards), we are still left with a memory requirement of only 14/50th of the non-sharded blockchain.

In other words, DAS gives up a very tiny amount of the efficiency we gained from sharding our chain and results in an enormous increase in the security of the sharded chain. Sadly, DAS still doesn’t ensure other shards are acting honestly – it just shows a high likelihood of it. Of course, this still isn’t acceptable, and that’s where erasure coding comes into play.

Erasure coding is super complex, but the general idea is to duplicate the data such that if 50% of a dataset is sampled, nodes can guarantee that 100% of the data exists and is valid. The analogy often used to explain erasure coding is as follows.

Imagine you put two random points on a graph and draw a line between them. Now put two more points anywhere on that line, and then erase the line. We are now left with four points and we are given the task to choose two of them to recreate the line. Becuase all the points were on the same line to begin with, it doesn’t matter which we choose. We could choose the original two points, the two added points, or a combination of either. They’ll all result in the same line. Now imagine you have a computer program that can replicate this idea across any type of dataset. This is erasure coding.

Long story short, our group of nodes only needs to sample 50% of an erasure-coded dataset to verify with certainty that all data in the block was made available and no invalid transactions exist.

Hopefully, that made sense… But if not, don’t feel bad.

Erasure coding is incredibly complex cryptography, and I still struggle to grasp it fully. All you need to know is blockchains use Reed-Solomon codes, and if erasure coding is done correctly, we finally have a securely sharded system. Sadly, as you may have noticed, we still have one remaining question: how can we ensure the block producer erasure coded its data correctly?

Proofs

The most common method to ensure the validity of a mathematical equation is a proof. In the case of blockchains, we operate with fraud proofs and validity proofs. Fraud proofs are a little misleading in their name as they aren’t proofs in and of themselves. Instead, they are a mechanism where the open market is incentivized to monitor a specific chain aspect and raise a “red flag” (the actual mathematical proof) when something goes wrong. The incentive goes to whoever flags the issue first, and due to the nature of open markets, we can have relative certainty some profit-motived actor will notice incorrect erasure codes and publish the resulting proof.

Validity proofs offer a more concrete degree of certainty and require block producers to provide a mathematical proof showing they erasure coded correctly. Validity proofs are more difficult to implement (for several reasons not worth getting into) but will likely be the long-term solution to this issue. We’ll discuss each of these in more detail during our section on rollups.

For now, if you’d like to learn more, I’d recommend this article. Additionally, if you’d like to learn more about DAS and Erasure Coding, I recommend starting here and here. Finally, this 40-minute lecture from Ethereum founder, Vitalik Buterin, is probably the best resource available to explain everything discussed above.

TL;DR - Sharded blockchains ensure security by:

Randomly assigning and reassigning nodes to different shards to ensure they can’t collude

Providing each node with an incredibly data-efficient method of double-checking that all shards in the network acted honestly.

As a result, efficient sharding implementations should allow a blockchain to scale its data storage capabilities by up to n(x), where x is the normal capacity of the blockchain and n is the number of shards used. It’s also possible to use sharding to increase transaction throughput, but that requires secure cross-shard messaging and is a topic for another day. Long story short, sharding has tons of potential but is likely the most difficult scaling solution to implement securely. As such, I wouldn’t expect to see a real, secure sharding solution used on any chain for at least a couple of years.

That’s it for this week! Next week, we will get into Part 2 of blockchain scaling, off-chain solutions. Until then, feel free to dive deeper into some of the materials listed below. I look forward to seeing you next week!

Reference Materials

On the Blockchain Trilemma

Alternate Consensus Models

Proof of Stake (Ethereum)

Delegated Proof of Stake (Cosmos)

Proof of History (Solana)

Proof of Coverage (Helium)

Pure Proof of Stake (Algorand)

On Data Availability

On Sharding

While transaction speed and throughput are the top areas of focus, some scaling mechanisms simply aim to increase energy efficiency.

See Proof of Work consensus, and its requirement that all nodes attempt to brute force the same nonce, with the possibility of only one being successful.

Proof of Stake also is key to enabling Sharding, which can potentially increase blockchain data storage AND transaction throughput.

Specifically, RSCs have largely been phased out of modern sharding applications (ie. Ethereum) due to the computational difficulty of constantly requiring nodes to redownload new shard data as they are reassigned. That said, describing them provides nice context and pays homage to the few lesser-known sharding applications still using them.